Operationalizing AI Systems for Enterprise Scale

AI ModelOps Enablement helps enterprises move from AI experimentation to reliable, production-scale AI systems.

While many organizations successfully build AI prototypes, they struggle to operationalize them. Challenges emerge around deployment, monitoring, governance, cost control, and system ownership.

This service focuses on building the engineering and operational foundation required to deploy, run, monitor, and evolve AI, GenAI, and Agentic AI systems in real enterprise environments.

Why Enterprises Need ModelOps Enablement

AI prototypes are relatively easy to build. Production AI is not.

Enterprises commonly face:

- Lack of deployment pipelines for models and GenAI systems

- No monitoring for model drift, performance degradation, or bias

- Absence of versioning, rollback, and lifecycle management

- Unclear ownership between data science and engineering teams

- Rising costs and unpredictable behavior in production

These gaps prevent AI systems from scaling reliably and delivering sustained business value.

What AI ModelOps Enablement Delivers

AI ModelOps Enablement establishes the end-to-end operational backbone required for enterprise AI systems.

It ensures that AI systems are:

- Deployable across environments

- Observable and measurable in real time

- Governed and compliant

- Cost-controlled and optimized

- Continuously evolving

- Supported across deployment and lifecycle management, including GenAI and Agentic AI systems

The focus is not on tools, but on engineering AI systems that can operate reliably under real-world conditions.

How ModelOps Enablement Works

This service builds and integrates the core components required to operationalize AI systems.

End-to-End AI and GenAI Pipelines

Design and implement production-grade pipelines for deploying and managing AI and LLM-powered systems.

- Secure model packaging and deployment workflows

- Environment-aware release management across development stages

- Rollback and recovery mechanisms
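As a rough illustration of environment-aware releases with rollback, the sketch below keeps a per-environment version history. The `ModelRegistry` class and its method names are hypothetical and exist only for this example; a real pipeline would typically rely on a model registry or deployment platform rather than in-memory state.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ModelRegistry:
    """Hypothetical in-memory record of deployed versions per environment."""

    history: dict[str, list[str]] = field(default_factory=dict)

    def deploy(self, env: str, version: str) -> None:
        # The most recently deployed version is treated as the active one.
        self.history.setdefault(env, []).append(version)

    def active(self, env: str) -> str | None:
        versions = self.history.get(env, [])
        return versions[-1] if versions else None

    def rollback(self, env: str) -> str:
        # Drop the current version and reactivate the one before it.
        versions = self.history.get(env, [])
        if len(versions) < 2:
            raise RuntimeError(f"no earlier version to roll back to in {env!r}")
        versions.pop()
        return versions[-1]


registry = ModelRegistry()
registry.deploy("staging", "v1")
registry.deploy("staging", "v2")
registry.rollback("staging")
print(registry.active("staging"))  # v1
```

Keeping history separate for each environment means a rollback in staging does not affect what is running in production.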

LLM Orchestration and Routing

Enable intelligent routing across multiple models and providers based on cost, performance, and risk.

- Dynamic selection of LLM providers

- Optimization for latency, cost, and reliability

- Support for hybrid and multi-model architectures
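One simple way to picture routing on cost, performance, and risk is a rule that filters providers by latency and data-sensitivity constraints and then picks the cheapest. The sketch below assumes this rule; the provider names, prices, and latency figures are invented for illustration and do not describe any real provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Provider:
    name: str
    cost_per_1k_tokens: float  # USD, illustrative value
    p95_latency_ms: float  # illustrative value
    approved_for_sensitive_data: bool


def choose_provider(providers, *, sensitive, max_latency_ms):
    """Return the cheapest provider that meets the risk and latency limits."""
    eligible = [
        p
        for p in providers
        if p.p95_latency_ms <= max_latency_ms
        and (p.approved_for_sensitive_data or not sensitive)
    ]
    if not eligible:
        raise LookupError("no provider satisfies the routing constraints")
    return min(eligible, key=lambda p: p.cost_per_1k_tokens)


# Hypothetical provider catalogue.
PROVIDERS = [
    Provider("hosted-large", 0.0300, 900, False),
    Provider("hosted-small", 0.0020, 300, False),
    Provider("self-hosted", 0.0100, 600, True),
]

print(choose_provider(PROVIDERS, sensitive=False, max_latency_ms=500).name)
print(choose_provider(PROVIDERS, sensitive=True, max_latency_ms=1000).name)
```

With these made-up numbers, the first call selects `hosted-small` and the second selects `self-hosted`. A production router would usually also account for live health signals and fallbacks, which this sketch omits.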

Enterprise Observability for AI Systems

Implement real-time visibility into AI system behavior.

- Output monitoring and validation

- Cost and token usage tracking

- System health and reliability metrics
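Cost and token usage tracking can be sketched as a small accumulator that records tokens per model and converts them to spend. The `UsageTracker` class and the price table are assumptions made for this example; actual prices and token counts would come from the provider's billing and response metadata.

```python
from collections import defaultdict


class UsageTracker:
    """Hypothetical accumulator for token usage and estimated cost per model."""

    def __init__(self, price_per_1k_tokens):
        # Mapping of model name -> assumed USD price per 1,000 tokens.
        self.price_per_1k_tokens = price_per_1k_tokens
        self.tokens = defaultdict(int)

    def record(self, model, prompt_tokens, completion_tokens):
        # Count prompt and completion tokens together for simplicity.
        self.tokens[model] += prompt_tokens + completion_tokens

    def cost(self, model):
        used = self.tokens.get(model, 0)
        return used / 1000 * self.price_per_1k_tokens[model]

    def total_cost(self):
        return sum(self.cost(model) for model in list(self.tokens))


tracker = UsageTracker({"model-a": 2.0, "model-b": 0.5})
tracker.record("model-a", prompt_tokens=400, completion_tokens=100)
tracker.record("model-b", prompt_tokens=1500, completion_tokens=500)
print(tracker.cost("model-a"), tracker.total_cost())
```

Many providers price prompt and completion tokens differently, so a fuller version would track the two counts separately.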

Guardrails and Safety Engineering

Design and implement control layers for safe AI operation.

- Prompt validation and sanitization

- Policy enforcement mechanisms

- Risk and safety controls aligned with enterprise requirements

- Integration with red teaming and testing outcomes
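A minimal sketch of prompt validation and sanitization might look like the following. The length limit, the blocked phrase, and the email-masking rule are illustrative assumptions, not a complete or recommended policy; real guardrails would be driven by the enterprise's own requirements and red-teaming findings.

```python
import re

MAX_PROMPT_CHARS = 4000  # assumed limit for illustration
BLOCKED_PATTERNS = [
    # Example phrase often associated with prompt-injection attempts.
    re.compile(r"ignore (all )?previous instructions", re.IGNORECASE),
]
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def sanitize(prompt: str) -> str:
    """Strip non-printable characters and mask email addresses."""
    cleaned = "".join(ch for ch in prompt if ch.isprintable() or ch in "\n\t")
    return EMAIL.sub("[REDACTED_EMAIL]", cleaned).strip()


def validate(prompt: str) -> list[str]:
    """Return a list of policy violations; an empty list means the prompt passes."""
    violations = []
    if len(prompt) > MAX_PROMPT_CHARS:
        violations.append("prompt too long")
    for pattern in BLOCKED_PATTERNS:
        if pattern.search(prompt):
            violations.append(f"blocked pattern: {pattern.pattern}")
    return violations


print(sanitize("Contact a.b@example.com\x00 now"))
print(validate("Please ignore previous instructions"))
```

Returning a list of violations rather than a single boolean makes it easier to log which policy was triggered.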

Fine-Tuning and Model Adaptation

Enable secure and compliant model customization where required.

- Controlled fine-tuning environments

- Data governance and compliance enforcement

- Model adaptation based on business-specific needs

Built on the NEXUS AI Framework

AI ModelOps Enablement is delivered within the NEXUS AI framework, ensuring:

- Lifecycle discipline from deployment to operations

- Embedded governance and auditability

- Observability and control across AI systems

- Alignment with enterprise architecture and platform standards

This ensures AI systems are not just deployed, but managed and evolved as enterprise systems.

Business Outcomes

AI ModelOps Enablement delivers measurable operational impact:

- Reduces delays between experimentation and real-world deployment

- Ensures consistent performance across environments and over time

- Enables traceability, auditability, and policy enforcement

- Detects drift, failures, and performance degradation early

- Tracks and optimizes usage of AI systems and LLMs

- Aligns data science, engineering, and operations teams

When to Use AI ModelOps Enablement

This service is best suited for organizations that:

- Have AI or GenAI prototypes but cannot scale them

- Are deploying LLM-powered applications into production

- Need governance, monitoring, and control for AI systems

- Are experiencing performance, cost, or reliability issues

- Want to standardize AI deployment and operations across teams

- Are building Agentic AI systems that require orchestration and control

What Makes This Different

AI ModelOps Enablement is positioned as an engineering capability focused on operating AI systems reliably in production environments.

- Designed for end-to-end operationalization across deployment, monitoring, governance, and lifecycle management

- Focused on system reliability, cost control, and performance at scale

- Bridges the gap between data science experimentation and enterprise engineering standards

- Built to support continuous evolution of AI systems rather than one-time deployment

- Delivered in a platform-agnostic manner, enabling flexibility across cloud and AI ecosystems

This ensures that AI systems are not only deployed, but consistently managed, controlled, and optimized in real-world enterprise conditions.

Make AI Work in Production

AI ModelOps Enablement ensures that AI systems are not just built, but operate reliably at enterprise scale.

Case Studies

Explore how NetWeb is delivering AI-powered transformation across industries—from healthcare and manufacturing to enterprise automation—through real-world applications of Generative AI, ML, and autonomous agents.

Human Posture Detection

AI Based Virtual Assistant

Long Care Patient Medical Summary

AI Powered Ambient Listening

Human Capabilities Analyzer

Advanced Gen AI based ChatBot powered by OpenAI's GPT-3

Label Printing Quality Inspection

Tyre Defect Detection

Remote Support & Predictive Maintenance App

High Speed Bearing Shield

Rubber Bush/O-Ring Vision Inspection Machine Using AI

Sentiment Analysis

Video Analysis for Sports Game

Insurance Risk Predictor with Dynamic Form Creation

Object detection: Broken/Intact Mobile Screen Detection

Object recognition using face detection: Face Mask Detection

Emotion Detection from Human Facial Expressions

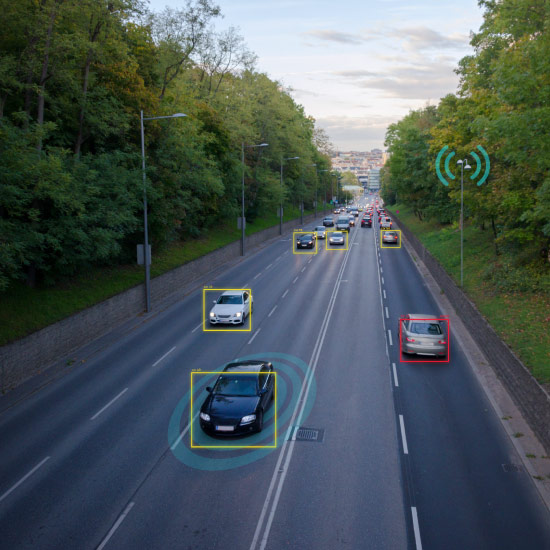

Computer Vision For Traffic Management

Sentiment Analysis and Prediction Tool

Automated Predictive Tool For Policy Intelligence

Let’s Start a Conversation

We’d love to learn more about your goals and how we can help. Share your details, and we’ll be in touch shortly.
