Engineering Reliable, Predictable, and Cost-Efficient AI Interactions

AI Interaction & LLM Optimization focuses on improving how applications, users, and workflows interact with Large Language Models to deliver consistent, accurate, and business-aligned outcomes.

Many enterprises successfully deploy GenAI solutions at pilot scale but struggle to achieve reliability, predictability, and cost efficiency in production. This service addresses that gap by treating AI interactions as engineered system components, not ad hoc configurations.

The result is AI that behaves consistently across use cases, users, and environments.

Why This Matters

Many GenAI systems perform well in controlled pilots but fail to deliver consistent results when exposed to real-world usage. This gap is driven by how AI interactions are designed and managed, not by the models themselves.

Common challenges include:

Prompts created during experimentation without structure or governance

Inconsistent outputs across similar inputs and workflows

Lack of audit trails and traceability

Hallucinations and variability in responses

Rising costs due to inefficient prompt design and repeated interactions

Lack of control over how AI behavior evolves over time

These issues reduce trust in AI systems and limit their ability to support real business workflows.

What This Service Delivers

AI Interaction & LLM Optimization enables organizations to stabilize, optimize, and govern AI behavior across enterprise use cases.

It ensures that:

- AI interactions are aligned with business context and workflows

- Outputs are consistent, accurate, and predictable

- Interaction patterns are structured for reliability

- Costs are controlled through efficient usage of models

- AI behavior can be managed, tested, and evolved over time

The focus is on making AI systems dependable in real-world enterprise conditions.

How It Works

This service applies structured engineering practices to optimize AI interactions and system behavior.

Business-Aligned Interaction Design

Design prompts and instructions aligned to enterprise workflows and domain context.

- Define role-specific interaction patterns

- Incorporate business rules and contextual constraints

- Align outputs with operational requirements
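To make role-specific interaction patterns concrete, here is a minimal Python sketch that turns a role definition, a set of business rules, and a required output format into chat-style messages. The `RoleProfile` class, the `build_messages` helper, and the sample rules are hypothetical illustrations, not part of any NEXUS AI interface; the system/user message shape follows the convention used by common chat-completion APIs but is not tied to a specific provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoleProfile:
    """Hypothetical description of one business role the assistant serves."""

    role: str                              # e.g. "claims analyst"
    domain_context: str                    # short description of the workflow
    business_rules: tuple[str, ...] = ()   # constraints the model must respect
    output_format: str = "plain text"      # what downstream systems expect


def build_messages(profile: RoleProfile, user_input: str) -> list[dict[str, str]]:
    """Render a role profile and a user request into chat-style messages."""
    rules = "\n".join(f"- {rule}" for rule in profile.business_rules) or "- (none)"
    system_prompt = (
        f"You are assisting a {profile.role}.\n"
        f"Context: {profile.domain_context}\n"
        f"Business rules:\n{rules}\n"
        f"Respond only in this format: {profile.output_format}"
    )
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_input.strip()},
    ]


if __name__ == "__main__":
    claims = RoleProfile(
        role="claims analyst",
        domain_context="Reviewing motor insurance claims before payout.",
        business_rules=(
            "Never approve a claim; only recommend.",
            "Cite the policy clause for every recommendation.",
        ),
        output_format="JSON with keys 'recommendation' and 'clause'",
    )
    for message in build_messages(claims, "  Summarize claim #123.  "):
        print(message["role"], "->", message["content"])
```

Keeping rules in data rather than in hand-edited prompt text makes them reviewable and testable on their own.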

LLM Interaction Pattern Engineering

Optimize how systems interact with LLMs across workflows.

- Implement structured interaction flows such as prompt chaining

- Define memory usage and context handling strategies

- Design tool invocation and response orchestration patterns
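Prompt chaining, memory handling, and tool invocation can be illustrated with a small, self-contained sketch. It assumes a linear chain where each step's output feeds the next, a memory window bounded to the last few turns, and a made-up `TOOL:<name>:<arg>` convention for tool requests. `ChainRunner`, `stub_model`, and `lookup_order` are hypothetical, and the stub stands in for a real LLM call.

```python
from collections import deque
from typing import Callable, Dict, List

# Any function from prompt text to response text counts as a "model" here.
# A real deployment would wrap an LLM client; a stub keeps the sketch runnable.
Model = Callable[[str], str]
Tool = Callable[[str], str]


class ChainRunner:
    """Hypothetical linear prompt chain with bounded memory and simple tool calls."""

    def __init__(self, model: Model, tools: Dict[str, Tool], memory_turns: int = 4) -> None:
        self.model = model
        self.tools = tools
        # deque(maxlen=...) silently drops the oldest turn, bounding context size.
        self.memory = deque(maxlen=memory_turns)

    def run(self, steps: List[str], initial_input: str) -> str:
        """Run step templates in order; each step receives the previous step's output."""
        current = initial_input
        for index, template in enumerate(steps, start=1):
            prompt = template.format(input=current, history="\n".join(self.memory))
            response = self.model(prompt)
            # Convention assumed for this sketch: "TOOL:<name>:<arg>" requests a tool call,
            # and the tool's result replaces the model's response for the next step.
            if response.startswith("TOOL:"):
                _, name, arg = response.split(":", 2)
                if name not in self.tools:
                    raise KeyError(f"unknown tool requested: {name}")
                response = self.tools[name](arg)
            self.memory.append(f"step {index}: {response}")
            current = response
        return current


def stub_model(prompt: str) -> str:
    """Deterministic stand-in for an LLM, used only to exercise the runner."""
    if prompt.startswith("Find order"):
        return "TOOL:lookup_order:" + prompt.rsplit(" ", 1)[-1]
    return f"Draft reply based on [{prompt.splitlines()[0]}]"


if __name__ == "__main__":
    runner = ChainRunner(stub_model, {"lookup_order": lambda oid: f"order {oid} shipped"})
    steps = ["Find order {input}", "Write a reply using: {input}\nHistory:\n{history}"]
    print(runner.run(steps, "A-17"))
```

Production orchestration usually adds branching, retries, and validation of tool requests; those are left out here to keep the pattern visible.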

Enterprise Prompt Asset Standardization

Establish governed and reusable prompt assets.

- Create versioned prompt configurations tied to business functions

- Treat prompts as managed system artifacts

- Enable consistency across teams and use cases
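One way to treat prompts as managed artifacts is a registry of immutable, versioned entries tied to a business function. The sketch below assumes semantic version tuples and an in-memory store; `PromptAsset`, `PromptRegistry`, and the content fingerprint are illustrative choices, not a description of how NEXUS AI stores prompt assets.

```python
import hashlib
from dataclasses import dataclass
from typing import Dict, Optional, Tuple

Version = Tuple[int, int, int]  # semantic version: (major, minor, patch)


@dataclass(frozen=True)
class PromptAsset:
    """One immutable, versioned prompt configuration (hypothetical schema)."""

    name: str
    version: Version
    business_function: str  # owning business function, e.g. "claims"
    template: str

    @property
    def fingerprint(self) -> str:
        # A short content hash lets audit logs record exactly which prompt text was used.
        return hashlib.sha256(self.template.encode("utf-8")).hexdigest()[:12]


class PromptRegistry:
    """In-memory registry; a real deployment might back this with a database or Git."""

    def __init__(self) -> None:
        self._assets: Dict[str, Dict[Version, PromptAsset]] = {}

    def register(self, asset: PromptAsset) -> None:
        versions = self._assets.setdefault(asset.name, {})
        if asset.version in versions:
            # Published versions are immutable: any change needs a new version number.
            raise ValueError(f"{asset.name} {asset.version} is already registered")
        versions[asset.version] = asset

    def get(self, name: str, version: Optional[Version] = None) -> PromptAsset:
        """Return a pinned version, or the highest version if none is given."""
        versions = self._assets[name]
        key = version if version is not None else max(versions)
        return versions[key]


if __name__ == "__main__":
    registry = PromptRegistry()
    registry.register(PromptAsset("claim_summary", (1, 0, 0), "claims", "Summarize: {text}"))
    registry.register(PromptAsset("claim_summary", (1, 1, 0), "claims", "Summarize in 3 bullets: {text}"))
    latest = registry.get("claim_summary")
    print(latest.version, latest.fingerprint)
```

Pinning a known version in production while testing a newer one side by side is what keeps prompt changes auditable and reversible.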

Performance Assessment and Optimization

Continuously evaluate and refine AI interaction performance.

- Measure accuracy, consistency, latency, and cost

- Identify failure modes and variability patterns

- Optimize prompts and interaction flows for stability and efficiency
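Measuring accuracy, consistency, latency, and cost can start with a simple harness that replays the same inputs several times. This sketch assumes exact-match scoring, a whitespace word count as a rough proxy for real tokenizer counts, and a hypothetical `price_per_1k_tokens` rate; the `evaluate` function and its metric definitions are illustrative, not a standard benchmark.

```python
import time
from collections import Counter
from statistics import mean
from typing import Callable, Dict, List, Tuple


def evaluate(
    model: Callable[[str], str],
    cases: List[Tuple[str, str]],
    runs_per_case: int = 5,
    price_per_1k_tokens: float = 0.002,  # hypothetical rate, not a real price list
) -> Dict[str, float]:
    """Score a prompt/model pairing on accuracy, consistency, latency, and rough cost.

    accuracy:    share of all runs whose stripped output equals the expected answer
    consistency: per case, share of runs matching the most common output, averaged
    cost:        whitespace "token" count (a crude proxy) times the per-1k rate
    """
    correct = 0
    agreement_scores = []
    latencies = []
    tokens = 0
    for prompt, expected in cases:
        outputs = []
        for _ in range(runs_per_case):
            start = time.perf_counter()
            output = model(prompt)
            latencies.append(time.perf_counter() - start)
            outputs.append(output)
            tokens += len(prompt.split()) + len(output.split())
            if output.strip() == expected:
                correct += 1
        # Count how many runs agree with the single most frequent output.
        modal_count = Counter(outputs).most_common(1)[0][1]
        agreement_scores.append(modal_count / runs_per_case)
    total_runs = len(cases) * runs_per_case
    return {
        "accuracy": correct / total_runs,
        "consistency": mean(agreement_scores),
        "mean_latency_s": mean(latencies),
        "estimated_cost": tokens / 1000 * price_per_1k_tokens,
    }


if __name__ == "__main__":
    from itertools import cycle

    # A deliberately unstable stub: right twice, then drifts.
    answers = cycle(["Paris", "Paris", "paris?"])
    print(evaluate(lambda p: next(answers), [("Capital of France?", "Paris")], runs_per_case=3))
```

Running the harness before and after a prompt change gives a like-for-like comparison. Repeated runs matter because a prompt that is right once but drifts on reruns shows up as low consistency even when accuracy looks acceptable.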

Built on the NEXUS AI Framework

AI Interaction & LLM Optimization is delivered within the NEXUS AI framework, ensuring:

- Structured management of AI interaction layers

- Integration with ModelOps, monitoring, and governance systems

- Continuous evaluation and improvement of AI behavior

- Alignment with enterprise architecture and operational controls

As a result, AI interactions are engineered, governed, and continuously optimized rather than ad hoc.

Key Capabilities

Business-context-aware prompt and instruction engineering

Structured LLM interaction and workflow design

Prompt chaining, memory, and tool orchestration patterns

Versioned and governed prompt asset management

Performance monitoring and optimization

Cost and efficiency optimization across AI usage

Business Outcomes

AI Interaction & LLM Optimization delivers measurable enterprise value:

Reduces hallucinations and variability in outputs

Aligns responses with domain and workflow context

Optimizes token usage and reduces rework

Enables versioning, testing, and auditability of AI interactions

Enhances performance without requiring new models

When to Use AI Interaction & LLM Optimization

This service is best suited for organizations that:

- Have GenAI applications in production with inconsistent outputs

- Experience high costs or inefficiencies in AI usage

- Need to improve accuracy and reliability of AI responses

- Want to standardize AI interactions across teams and use cases

- Are scaling Agentic AI systems that require controlled behavior

- Need better governance over AI interaction patterns

What Makes This Different

AI Interaction & LLM Optimization focuses on engineering AI behavior for reliability, consistency, and efficiency at scale.

Treats AI interactions as structured, governed system components

Aligns prompts and interaction flows with business workflows and context

Focuses on stabilizing AI behavior rather than experimenting with prompts

Enables consistent outcomes across users, use cases, and environments

Optimizes performance and cost without changing underlying models

This ensures that AI systems move from unpredictable responses to dependable, enterprise-ready behavior.

Make AI Systems Reliable at Scale

AI Interaction & LLM Optimization helps organizations improve accuracy, reduce cost, and ensure consistent AI behavior across enterprise use cases.

Case Studies

Explore how NetWeb is delivering AI-powered transformation across industries—from healthcare and manufacturing to enterprise automation—through real-world applications of Generative AI, ML, and autonomous agents.

Human Posture Detection

AI Based Virtual Assistant

Long Care Patient Medical Summary

AI Powered Ambient Listening

Human Capabilities Analyzer

Advanced GenAI-based Chatbot powered by OpenAI's GPT-3

Label Printing Quality Inspection

Tyre Defect Detection

Remote Support & Predictive Maintenance App

High Speed Bearing Shield

Rubber Bush/O-Ring Vision Inspection Machine Using AI

Sentiment Analysis

Video Analysis for Sports Game

Insurance Risk Predictor with Dynamic Form Creation

Object detection: Broken/Intact Mobile Screen Detection

Object recognition using face detection: Face Mask Detection

Emotion Detection from Human Facial Expressions

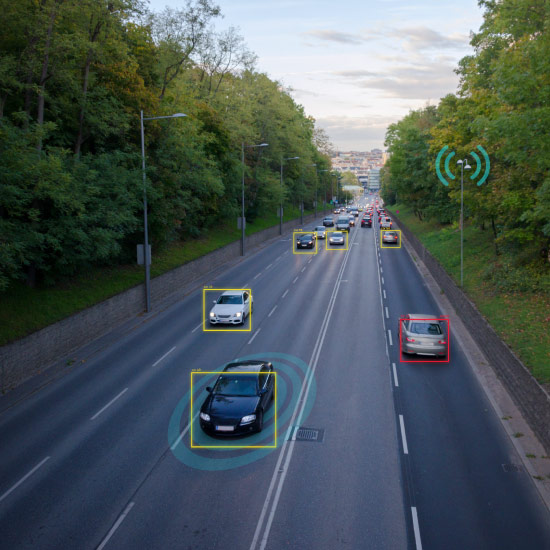

Computer Vision For Traffic Management

Sentiment Analysis and Prediction Tool

Automated Predictive Tool For Policy Intelligence

Let’s Start a Conversation

We’d love to learn more about your goals and how we can help. Share your details, and we’ll be in touch shortly.